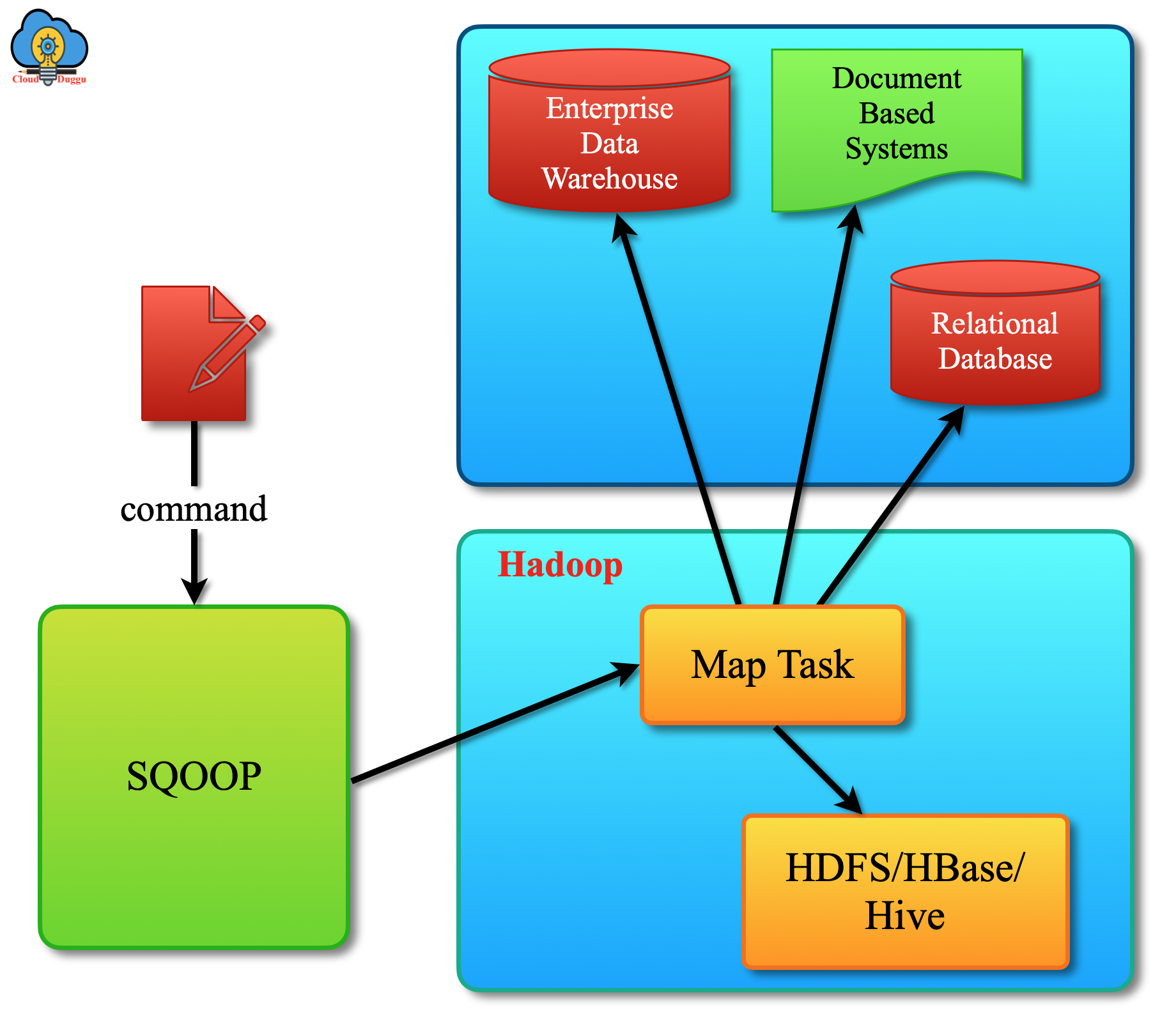

Apache Sqoop is a tool designed to move data between relational database management systems (RDBMS) and Hadoop. Many organizations use it to transfer data from an RDBMS such as MySQL or Oracle, or from a mainframe, into the Hadoop Distributed File System (HDFS), and to transfer data from HDFS back to the RDBMS.

Let us look at the Apache Sqoop architecture with the help of the diagram.

Apache Sqoop is an important big data tool that many organizations use to import data from relational databases into Hadoop HDFS and to export it back again. It provides two main tools for this purpose: the Import tool and the Export tool.

Let us look at each tool in turn.

1. Import Tool

The Apache Sqoop Import tool imports a table from a relational database management system into Hadoop HDFS. During the import, each row of the table is treated as an individual record in HDFS, and the records are stored as text files or in binary formats such as Avro or SequenceFiles.
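To make the file format choice concrete, here is a minimal sketch that assembles a `sqoop import` command line in Python. The helper `build_import_command`, the JDBC URL, the user name, and the target directory are all hypothetical and exist only for illustration. The flags themselves (`--connect`, `--table`, `--target-dir`, `--as-textfile`, `--as-avrodatafile`, `--as-sequencefile`, `--num-mappers`) are standard Sqoop import options.

```python
import shlex

# Sqoop flags that select the HDFS file format for an import.
FORMAT_FLAGS = {
    "text": "--as-textfile",
    "avro": "--as-avrodatafile",
    "sequence": "--as-sequencefile",
}


def build_import_command(jdbc_url, username, table, target_dir,
                         file_format="text", num_mappers=4):
    """Return the argument list for a `sqoop import` invocation (hypothetical helper)."""
    return [
        "sqoop", "import",
        "--connect", jdbc_url,
        "--username", username,
        "-P",  # prompt for the password at run time
        "--table", table,
        "--target-dir", target_dir,
        FORMAT_FLAGS[file_format],
        "--num-mappers", str(num_mappers),
    ]


# Hypothetical connection details, for illustration only.
cmd = build_import_command(
    jdbc_url="jdbc:mysql://localhost/shop",
    username="sqoop_user",
    table="ORDERS",
    target_dir="/user/hadoop/orders",
    file_format="avro",
)
print(shlex.join(cmd))
```

The sketch only prints the command. On a machine where Sqoop is installed, the same list could be passed to `subprocess.run(cmd, check=True)`.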

The following example illustrates the functional flow of the Import tool.

Suppose we have an ORDERS table in a MySQL database and we want to import all of its rows into Hadoop HDFS. The import proceeds in the following steps.

Step 1. In the first step, Apache Sqoop examines the database to collect the required metadata about the data to be imported.

Step 2. In the second step, Sqoop submits a map-only job that uses this metadata to transfer the data from the RDBMS to Hadoop HDFS.
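The two import steps can be sketched with a small simulation. This is not Sqoop's implementation. It assumes an in-memory SQLite database in place of MySQL, a non-empty table with a numeric key, and a dictionary of lines in place of HDFS part files. The function name `import_table` and the sample rows are made up for the example.

```python
import sqlite3


def import_table(conn, table, key, num_mappers):
    """Simulate the two-step import flow (assumes a non-empty table)."""
    cur = conn.cursor()

    # Step 1: gather metadata (column names and the key's value range).
    columns = [row[1] for row in cur.execute(f"PRAGMA table_info({table})")]
    lo, hi = cur.execute(
        f"SELECT MIN({key}), MAX({key}) FROM {table}"
    ).fetchone()

    # Step 2: each simulated map task reads one key range and
    # writes one part file; there is no reduce phase.
    size = (hi - lo + 1 + num_mappers - 1) // num_mappers  # ceiling division
    part_files = {}
    for task in range(num_mappers):
        start = lo + task * size
        end = min(start + size - 1, hi)
        rows = cur.execute(
            f"SELECT {', '.join(columns)} FROM {table} "
            f"WHERE {key} BETWEEN ? AND ? ORDER BY {key}",
            (start, end),
        ).fetchall()
        # One comma-delimited text line per source row.
        part_files[f"part-m-{task:05d}"] = [
            ",".join(str(value) for value in row) for row in rows
        ]
    return columns, part_files


# Made-up sample data standing in for the ORDERS table.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE ORDERS (order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
)
conn.executemany(
    "INSERT INTO ORDERS VALUES (?, ?, ?)",
    [(i, f"customer{i}", 10.0 * i) for i in range(1, 11)],
)

columns, part_files = import_table(conn, "ORDERS", "order_id", num_mappers=4)
for name, lines in part_files.items():
    print(name, lines)
```

Splitting on the key range means every task issues an independent query, which is why the work can run in parallel without a reduce phase.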

2. Export Tool

The Apache Sqoop Export tool exports a set of files from Hadoop HDFS back to an RDBMS. During the export, Sqoop reads each file, parses it into records, and transfers those records to the target table in the database.
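As a rough illustration, an export command line could be assembled as follows. The helper `build_export_command` and all connection details are hypothetical. The `--export-dir` flag names the HDFS directory to read, and `--input-fields-terminated-by` tells Sqoop how to parse each line into fields; both are standard Sqoop export options.

```python
import shlex


def build_export_command(jdbc_url, username, table, export_dir,
                         field_delimiter=","):
    """Return the argument list for a `sqoop export` invocation (hypothetical helper)."""
    return [
        "sqoop", "export",
        "--connect", jdbc_url,
        "--username", username,
        "-P",  # prompt for the password at run time
        "--table", table,            # target table, which must already exist
        "--export-dir", export_dir,  # HDFS directory holding the input files
        "--input-fields-terminated-by", field_delimiter,
    ]


# Hypothetical connection details, for illustration only.
export_cmd = build_export_command(
    jdbc_url="jdbc:mysql://localhost/shop",
    username="sqoop_user",
    table="ORDERS",
    export_dir="/user/hadoop/orders",
)
print(shlex.join(export_cmd))
```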

The following example illustrates the functional flow of the Export tool.

We will again use the ORDERS table, this time moving its data from Hadoop HDFS to the MySQL database in the following steps.

Step 1. In the first step, Sqoop introspects the database to gather the necessary metadata for the data being exported.

Step 2. In the second step, Sqoop divides the input dataset into splits and uses individual map tasks to push the splits to the database. Each map task performs its transfer over multiple transactions, which keeps resource utilization low and throughput high.
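The export steps can be sketched in the same spirit. This simulation is a simplification under stated assumptions: an in-memory SQLite table stands in for MySQL, a Python list of comma-delimited lines stands in for the HDFS files, and the input is divided round-robin rather than by file blocks. The function name `export_lines` and the `batch_size` parameter are invented for the example.

```python
import sqlite3


def export_lines(conn, table, lines, num_mappers, batch_size):
    """Simulate an export: split the input, then insert in batched transactions."""
    # Step 1: read the target table's metadata to learn its columns.
    columns = [row[1] for row in conn.execute(f"PRAGMA table_info({table})")]
    placeholders = ", ".join("?" for _ in columns)
    insert_sql = f"INSERT INTO {table} VALUES ({placeholders})"

    # Step 2: divide the input into one split per simulated map task.
    splits = [lines[i::num_mappers] for i in range(num_mappers)]

    commits = 0
    for split in splits:
        # Parse each line into a record of field values.
        records = [line.split(",") for line in split]
        # Commit after every batch so no single transaction grows too large.
        for start in range(0, len(records), batch_size):
            conn.executemany(insert_sql, records[start:start + batch_size])
            conn.commit()
            commits += 1
    return commits


# Made-up target table and input lines standing in for ORDERS data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE ORDERS (order_id INTEGER, customer TEXT, amount REAL)")
lines = [f"{i},customer{i},{10.0 * i}" for i in range(1, 11)]

commits = export_lines(conn, "ORDERS", lines, num_mappers=3, batch_size=2)
print("transactions committed:", commits)
```

Committing per batch rather than once per task is the design point here: it bounds the amount of uncommitted work the database has to hold at any moment.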

Each tool is covered in more detail in the Import and Export sections.