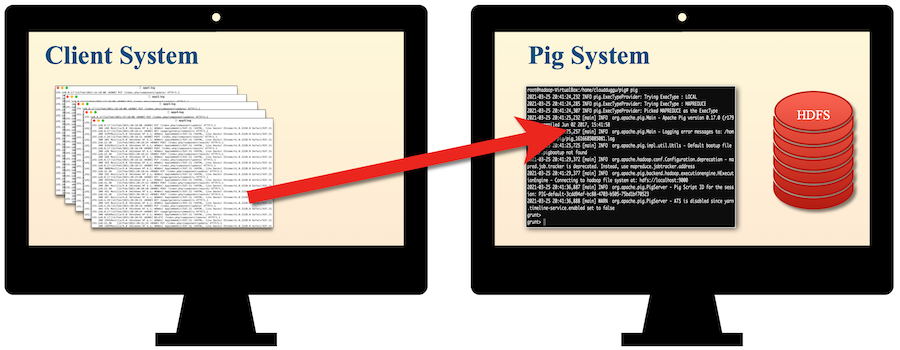

The objective of this tutorial is to create an Apache Pig project for the Application-URL data set. We take the Application-URL-Request data set from the Client System and process it in the Pig System. After processing, the Pig System pushes the result data back to the Client System, which then shows the data in a tabular format.

So readers, are you ready to create an Apache Pig project (Application-URL-Stats)?

1. Idea of the Project

a. Fetch raw log data from the Client System.

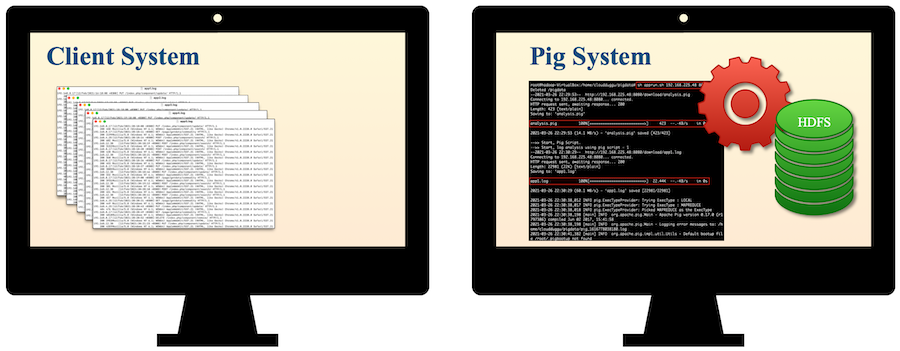

b. Process the raw log data in the Pig System.

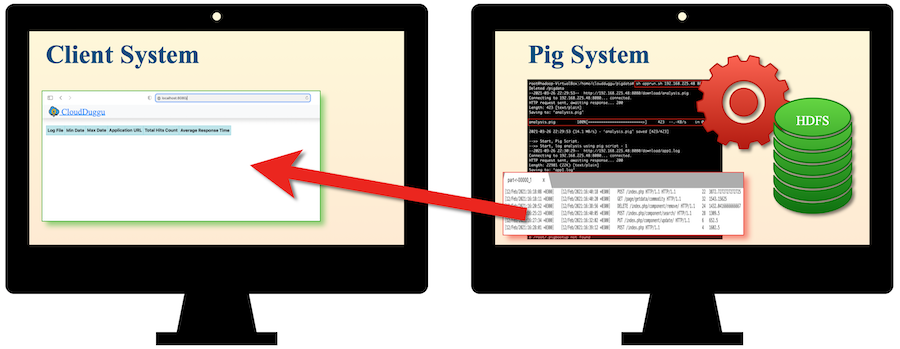

c. Push the result data from the Pig System to the Client System.

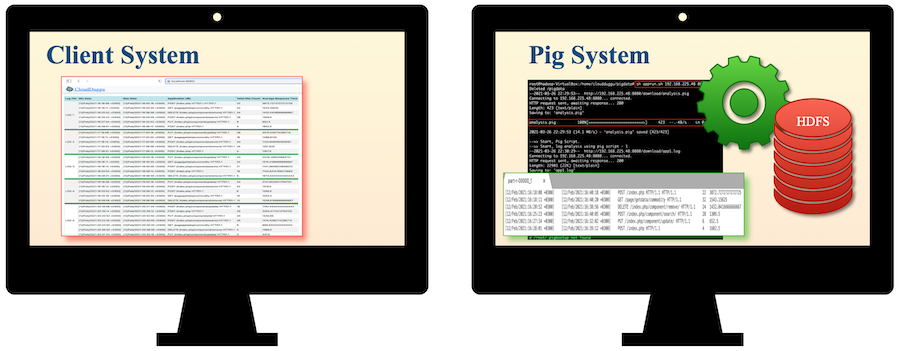

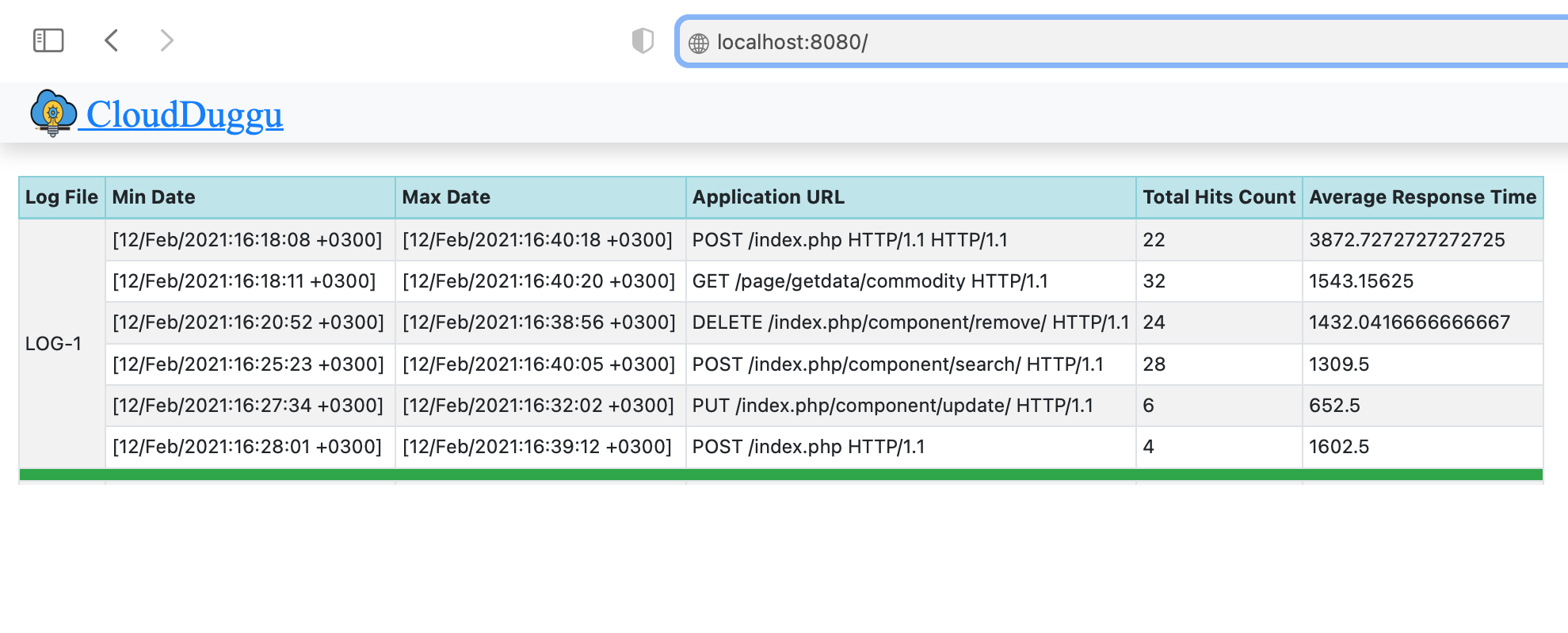

d. Show the result data in the Client System as tabular trends.
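The project draws these trends as a bar chart in index.html. As a rough illustration of step d outside the browser, here is a Python sketch that turns result rows into a text table with bars. It assumes the result file uses PigStorage's default tab-delimited output with one `url<TAB>count` row per line; the real column order of the project's result file is not shown, so treat that layout as an assumption.

```python
def parse_result_lines(lines):
    """Parse 'url<TAB>count' lines (assumed layout of the Pig result file)."""
    rows = []
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue  # ignore blank lines
        url, count = line.split("\t")
        rows.append((url, int(count)))
    return rows


def render_table(rows, width=30):
    """Return one text row per URL, largest count first, with a bar scaled to `width`."""
    if not rows:
        return ""
    max_count = max(count for _, count in rows)
    url_width = max(len(url) for url, _ in rows)
    lines = []
    for url, count in sorted(rows, key=lambda r: r[1], reverse=True):
        # Bar length is proportional to the count; guard against an all-zero result.
        bar = "#" * (round(width * count / max_count) if max_count else 0)
        lines.append(f"{url:<{url_width}}  {count:>6}  {bar}")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = ["/home\t120\n", "/search\t60\n", "/login\t42\n"]  # made-up rows for illustration
    print(render_table(parse_result_lines(sample), width=20))
```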

2. Building the Project

To run this project, you can install a VM (virtual machine) on your local system and configure Pig and Hadoop on it. After this configuration, your local system works as the Client System and the Pig VM works as the Pig System. Alternatively, you can use two systems that can communicate with each other, with Pig configured on one of them.
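Whichever setup you choose, the Pig System must be able to open a connection to the Client System's web port. A quick way to check this from the Pig System is a plain TCP connect. The Python sketch below uses only the standard library and assumes the client listens on the host and port you pass in (for example, the port 8080 used later in this tutorial).

```python
import socket
import sys


def is_reachable(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, timeout (socket.timeout is an OSError subclass), DNS failure, ...
        return False


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python check_client.py <client-ip> <client-port>
    host, port = sys.argv[1], int(sys.argv[2])
    print("reachable" if is_reachable(host, port) else "NOT reachable")
```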

Let us look at this project in detail and then run it using the steps below.

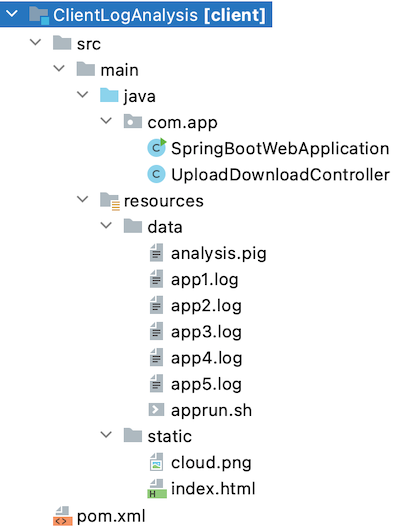

a. The Client System


It is a Spring Boot (Java) application. Building this project creates an executable "client.jar" file.

It contains Java code, data files, and static HTML pages.

The Java code consists of two files: SpringBootWebApplication.java and UploadDownloadController.java.

SpringBootWebApplication.java is the main application class; it starts the application on an embedded web server.

UploadDownloadController.java provides the HTTP download and upload services: the download URL serves the raw data files, and the upload URL receives the result files.

The data folder holds the unprocessed raw log files (app1.log, app2.log, app3.log, ...).

The static folder holds the HTML page (index.html). This is the main client view page, which shows the result data produced by Pig.

pom.xml is the Maven build file. It lists the Java dependencies and the build configuration details.

To create the "client.jar" file, run the command "mvn clean package".

Click here to download the "ClientLogAnalysis" project zip file.

b. Apache Pig System

Download the apprun.sh shell script file. It runs the Pig script repeatedly, once for each downloaded raw log file.

analysis.pig is the Pig script file. It contains the Pig queries that load and process the raw log files.

3. Run the Project

Each step below has two parts: a. the Client System and b. the Pig System.

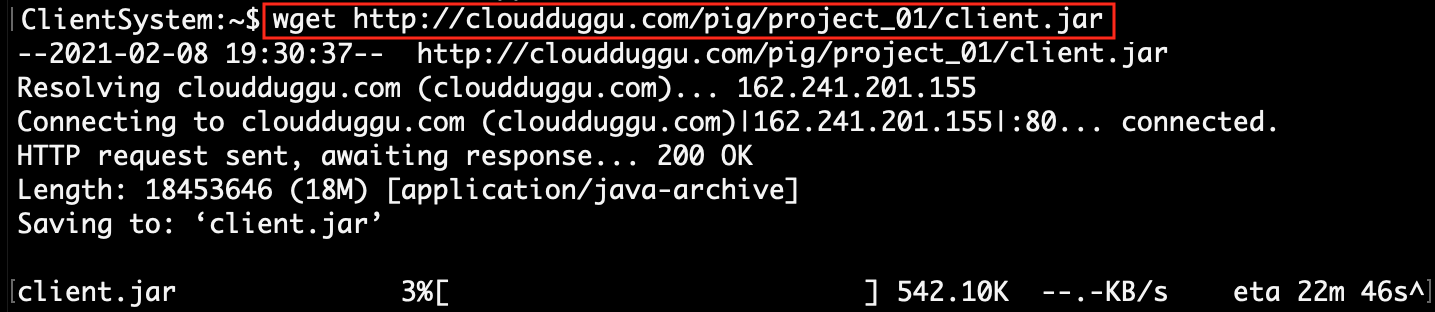

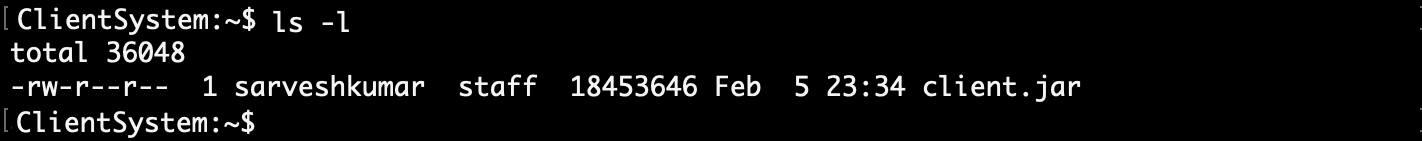

Step 1 (a. Client System): Download client.jar in the Client System. Click here to download the "client.jar" executable jar file.

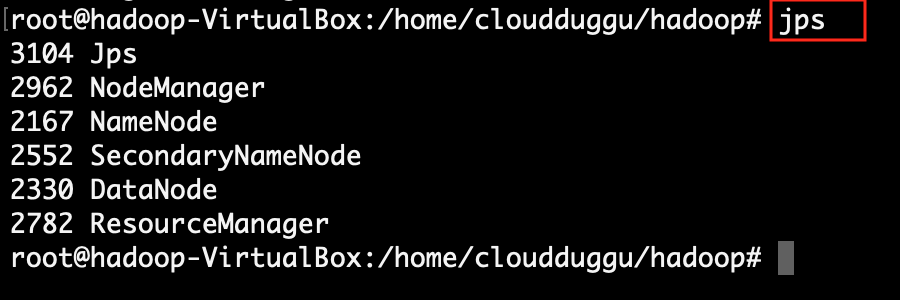

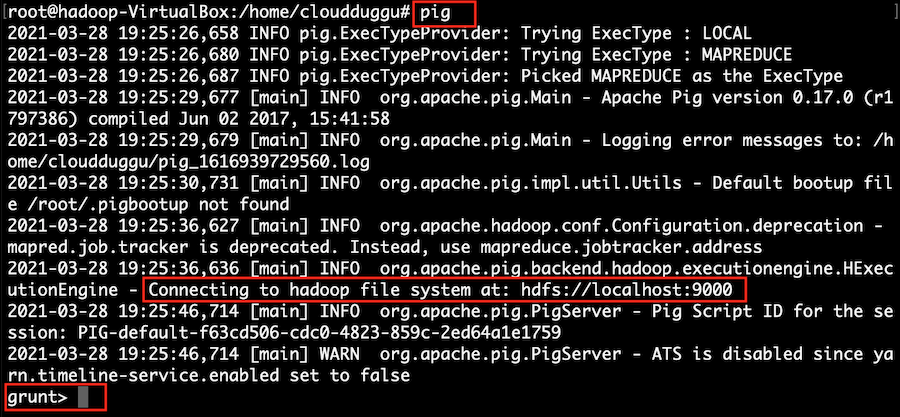

Step 1 (b. Pig System): Check all Hadoop and Pig configurations in the Pig System.


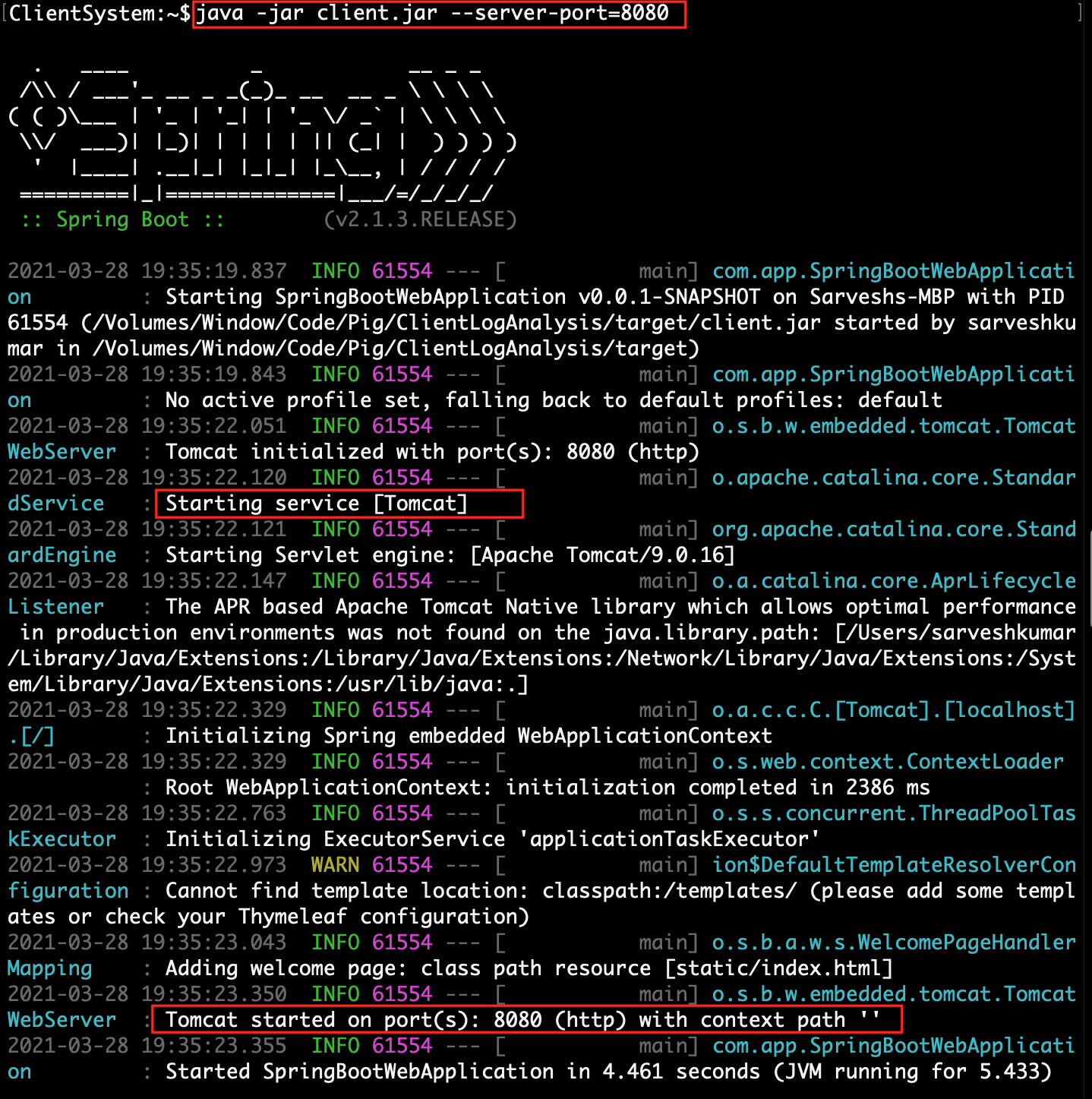

Step 2 (a. Client System): Run client.jar in the Client System, passing the server port (8080) at startup. If port 8080 is already in use on the client system, pass a different port instead.

java -jar client.jar --server.port=8080
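If you are not sure whether 8080 is free, you can test it before starting the jar. This Python sketch tries to bind each candidate port in turn and prints the matching `java -jar` command. Keep in mind that another program could still take the port between this check and the moment client.jar starts.

```python
import socket


def port_is_free(port, host="0.0.0.0"):
    """Return True if `port` can be bound on `host` right now."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as s:
        try:
            s.bind((host, port))
        except OSError:
            return False  # typically "Address already in use"
    return True


def first_free_port(start=8080, attempts=20):
    """Return the first bindable port in [start, start + attempts), or None."""
    for port in range(start, start + attempts):
        if port_is_free(port):
            return port
    return None


if __name__ == "__main__":
    port = first_free_port()
    if port is None:
        print("no free port found near 8080")
    else:
        print(f"java -jar client.jar --server.port={port}")
```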


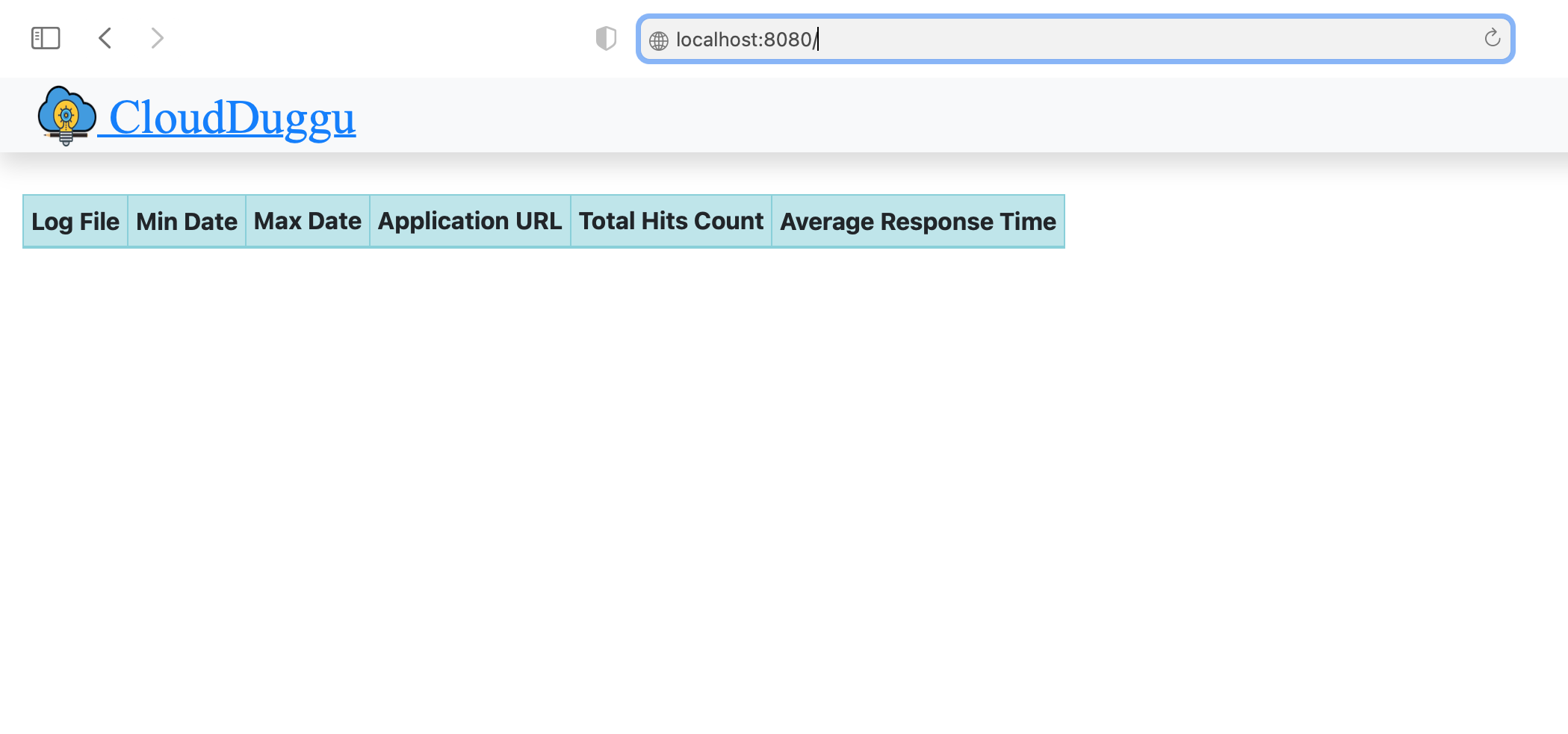

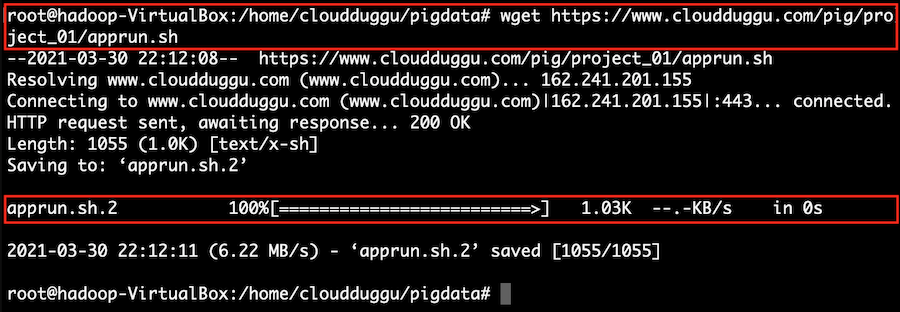

Step 3 (a. Client System): Open the client page in a browser at http://localhost:8080.

Step 3 (b. Pig System): Download the apprun.sh script file into the Pig System.
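Spring Boot can take a few seconds to start, so the client page may not answer the moment client.jar is launched. A small polling helper, sketched here in Python with only the standard library, waits until the page returns HTTP 200. The default URL matches the one above; the timeout and polling interval are arbitrary choices.

```python
import http.client
import time
import urllib.request


def wait_for_page(url="http://localhost:8080", timeout=30.0, interval=0.5):
    """Return True once `url` answers with HTTP 200, or False after `timeout` seconds."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=interval) as resp:
                if resp.status == 200:
                    return True
        except (OSError, http.client.HTTPException):
            # Not up yet: connection refused, timeout, HTTP error status, malformed reply, ...
            pass
        time.sleep(interval)
    return False
```

For example, `wait_for_page()` returns True as soon as the embedded server on port 8080 serves the page, and False if it never does within 30 seconds.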


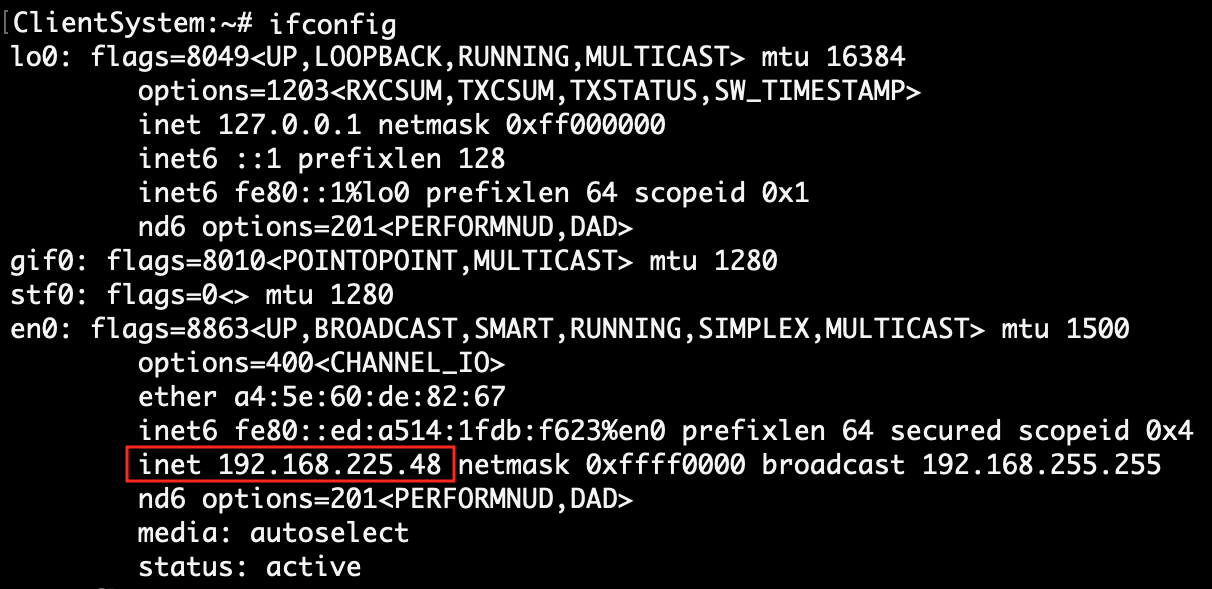


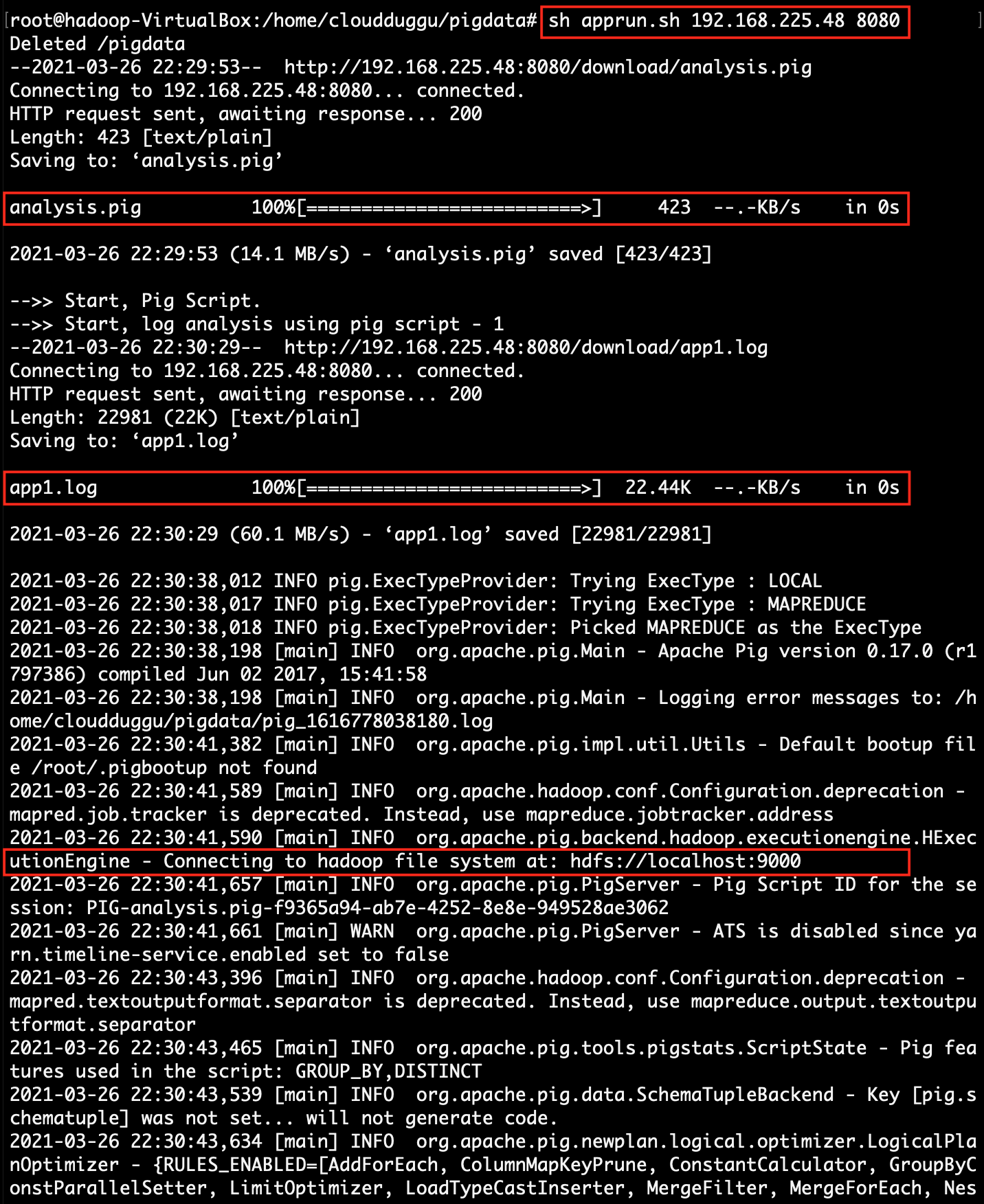

Step 4 (a. Client System): Find the Client System's IP address that is reachable from the Pig System.

Step 4 (b. Pig System): Execute the apprun.sh script, passing the client IP address and client port number:

sh apprun.sh 192.168.225.48 8080
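The address 192.168.225.48 above is only an example; use the Client System's own address. One common way to find the address the operating system would use for outgoing traffic is to "connect" a UDP socket, which sends no packets. In this Python sketch the probe address 192.0.2.1 (a reserved documentation address) is an arbitrary choice; on a machine with several network interfaces, pass the Pig System's IP as `probe_host` instead.

```python
import socket


def guess_lan_ip(probe_host="192.0.2.1", probe_port=80):
    """Return the local IPv4 address the OS would use to reach `probe_host`.

    Connecting a UDP socket sends no packets; it only makes the OS pick a route
    and a source address. Falls back to 127.0.0.1 when no route exists.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
        try:
            s.connect((probe_host, probe_port))
            return s.getsockname()[0]
        except OSError:
            return "127.0.0.1"


if __name__ == "__main__":
    # Prints the command to run on the Pig System (8080 = client port from step 2).
    print(f"sh apprun.sh {guess_lan_ip()} 8080")
```

The 127.0.0.1 fallback only helps when Pig runs on the same host, not inside a separate VM.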


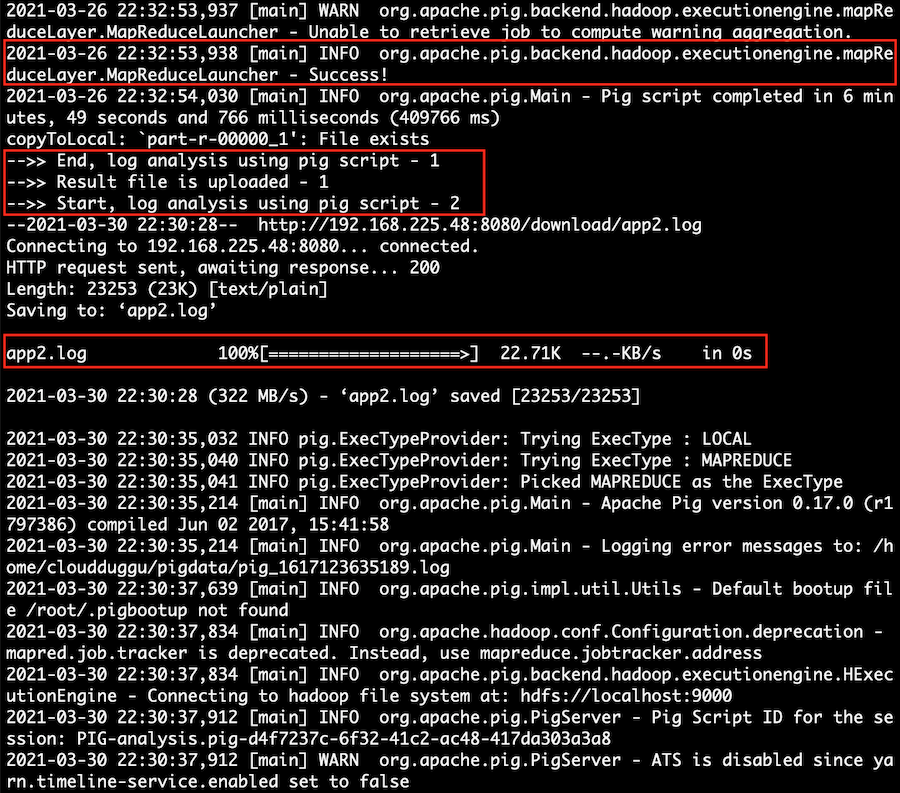

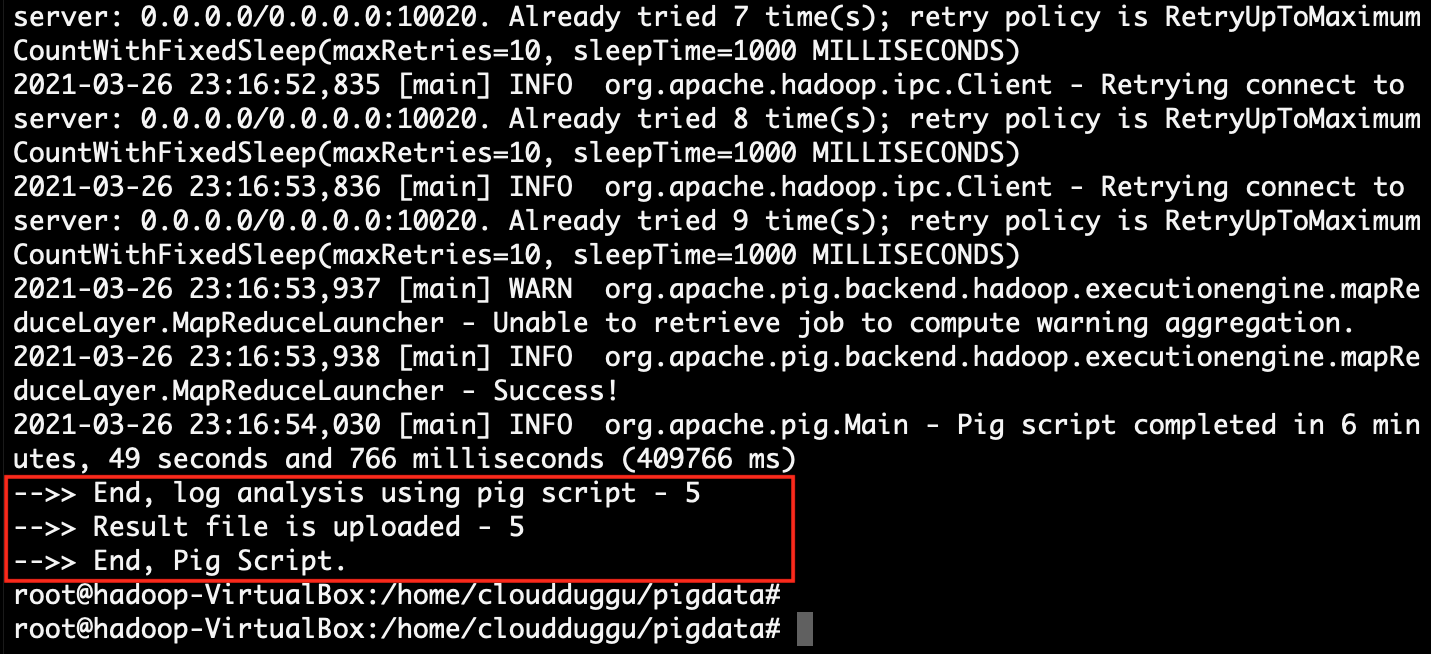

Step 5 (a. Client System): After the Pig System finishes processing a log file, the client page automatically shows the result in the bar chart.

Step 5 (b. Pig System): The Pig System downloads a raw log file from the Client System, runs the Pig script on it, and sends the result values back to the Client System. It then starts processing the next log file.


Step 6 (a. Client System): Once the Pig System completes all of its processing, the client page shows all the results in the bar chart.

Step 6 (b. Pig System): The apprun.sh script processes the files in a loop; when the loop finishes, execution ends automatically.


4. Project Files in Detail

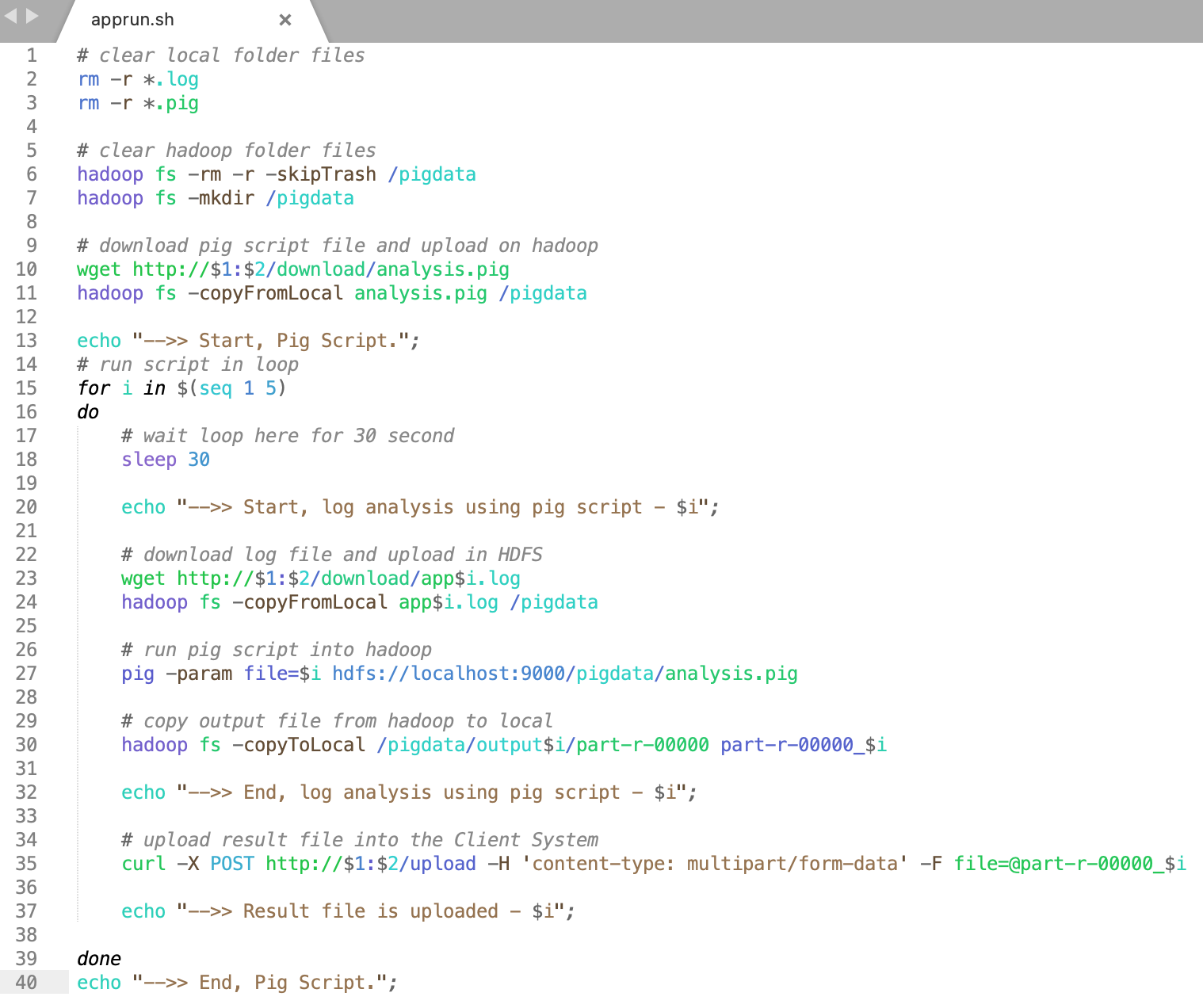

(i). apprun.sh

With the project's shell script (apprun.sh), we can download the raw log files and run the Pig script on them in the Pig System.

Each line of the shell script (apprun.sh) is explained below.

The apprun.sh file contains Linux shell commands.

(Lines 2 and 3) delete all existing files from the local directory of the Pig System.

(Line 6) deletes the directory in Hadoop HDFS, and (line 7) creates it again.

(Line 10) downloads the Pig script file into the local directory of the Pig System.

(Line 11) uploads the Pig script file from the local directory to the HDFS directory.

(Line 15) starts the loop that processes the log files one by one.

(Line 23) downloads a log file into the local directory of the Pig System, and (line 24) uploads it to HDFS.

(Line 27) runs the Pig script on the data in HDFS.

(Line 30) copies the result file from HDFS to the local directory of the Pig System.

(Line 35) uploads the result file from the Pig System to the Client System.
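The script itself appears only as a screenshot, so its exact commands are not reproduced here. The Python sketch below rebuilds the same sequence as a dry-run plan under several assumptions: the local folder `pigwork`, the HDFS folder `/pigwork`, the client URLs `/download/<file>` and `/upload/<file>`, the `input`/`output` Pig parameters, and the `num_files` loop bound are all hypothetical. It uses `curl` for the HTTP transfers plus the standard `hadoop fs` and `pig` commands, and it runs the local copy of analysis.pig (the HDFS copy from line 11 is still made, as in the original).

```python
import shlex
import subprocess
import sys

# Hypothetical names: the real paths and URLs live in apprun.sh, which is not reproduced here.
LOCAL_DIR = "pigwork"
HDFS_DIR = "/pigwork"
SCRIPT = "analysis.pig"


def setup_commands(client):
    """Lines 2-11: reset the local and HDFS folders, fetch the Pig script, copy it to HDFS."""
    return [
        f"rm -rf {LOCAL_DIR}",                                           # lines 2-3
        f"mkdir -p {LOCAL_DIR}",
        f"hadoop fs -rm -r -f {HDFS_DIR}",                                # line 6
        f"hadoop fs -mkdir -p {HDFS_DIR}",                                # line 7
        f"curl -sf -o {LOCAL_DIR}/{SCRIPT} {client}/download/{SCRIPT}",  # line 10 (source URL hypothetical)
        f"hadoop fs -put -f {LOCAL_DIR}/{SCRIPT} {HDFS_DIR}/{SCRIPT}",    # line 11
    ]


def file_commands(client, n):
    """Lines 23-35 for log file number n (app<n>.log, as in the client's data folder)."""
    log = f"app{n}.log"
    out = f"{HDFS_DIR}/out{n}"  # a fresh output folder per file; Pig won't STORE into an existing one
    result = f"{LOCAL_DIR}/result{n}.txt"
    return [
        f"curl -sf -o {LOCAL_DIR}/{log} {client}/download/{log}",                        # line 23
        f"hadoop fs -put -f {LOCAL_DIR}/{log} {HDFS_DIR}/{log}",                          # line 24
        f"pig -param input={HDFS_DIR}/{log} -param output={out} -f {LOCAL_DIR}/{SCRIPT}",  # line 27
        f"hadoop fs -getmerge {out} {result}",                                            # line 30
        f"curl -sf --data-binary @{result} {client}/upload/result{n}.txt",                # line 35
    ]


def plan(client_ip, client_port, num_files):
    """Build the full command list: setup once, then one block per log file (the line 15 loop)."""
    client = f"http://{client_ip}:{client_port}"
    cmds = setup_commands(client)
    for n in range(1, num_files + 1):
        cmds += file_commands(client, n)
    return cmds


def run(cmds, dry_run=True):
    """Print each command; execute it only when dry_run is False (raises on the first failure)."""
    for cmd in cmds:
        print(cmd)
        if not dry_run:
            subprocess.run(shlex.split(cmd), check=True)


if __name__ == "__main__" and len(sys.argv) == 4:
    # Usage: python apprun_plan.py <client-ip> <client-port> <num-files>
    run(plan(sys.argv[1], int(sys.argv[2]), int(sys.argv[3])))
```

Calling `run(plan("192.168.225.48", 8080, 3))` only prints the commands; pass `dry_run=False` to execute them, which stops at the first failing command with a `CalledProcessError`.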

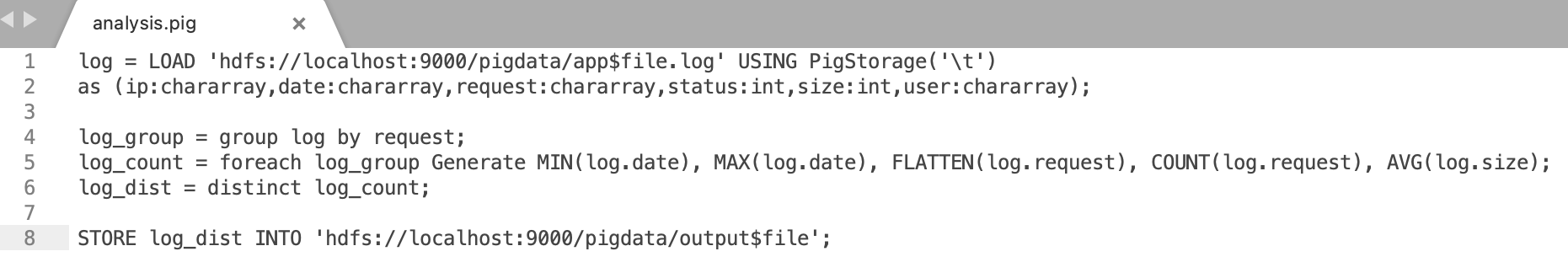

(ii). analysis.pig

With this Pig script file (analysis.pig), we load the log files using PigStorage and run the analysis logic on them.

The following is a line-by-line explanation of the analysis.pig file.

The analysis.pig file contains Pig Latin statements.

(Lines 1 and 2) load the raw log file from HDFS using PigStorage, with tab as the field separator.

(Line 4) groups the records by Application URL Request.

(Line 5) counts the records in each group.

(Line 6) keeps only the distinct rows of the computed counts.

(Line 8) stores the result data in HDFS.
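To check the Pig output on a small file without a cluster, the same pipeline can be mirrored in a few lines of Python. This is a sketch, not the project's code: the URL column position (`url_field`) is hypothetical, and it assumes line 6 applies DISTINCT to the (URL, count) rows. Since GROUP already produces one row per URL, such a DISTINCT cannot remove anything.

```python
from collections import Counter


def analyze(lines, url_field=0):
    """Mirror LOAD (tab-separated) -> GROUP BY url -> COUNT -> DISTINCT.

    `url_field` is the (hypothetical) 0-based column holding the Application URL Request.
    Records without that column are skipped. Returns (url, count) pairs sorted by url.
    """
    # Lines 1-2: LOAD ... USING PigStorage('\t') splits each record on tabs.
    records = [line.rstrip("\n").split("\t") for line in lines if line.strip()]
    # Lines 4-5: GROUP by URL, then COUNT the records in each group.
    counts = Counter(rec[url_field] for rec in records if len(rec) > url_field)
    # Line 6: DISTINCT over (url, count) rows; after GROUP these are already unique.
    rows = set(counts.items())
    # Line 8: STORE would write these rows out; here we simply return them.
    return sorted(rows)
```

For example, with `rows = analyze(open("app1.log"), url_field=1)`, the expression `"\n".join(f"{u}\t{c}" for u, c in rows)` gives the tab-separated layout that PigStorage writes by default.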

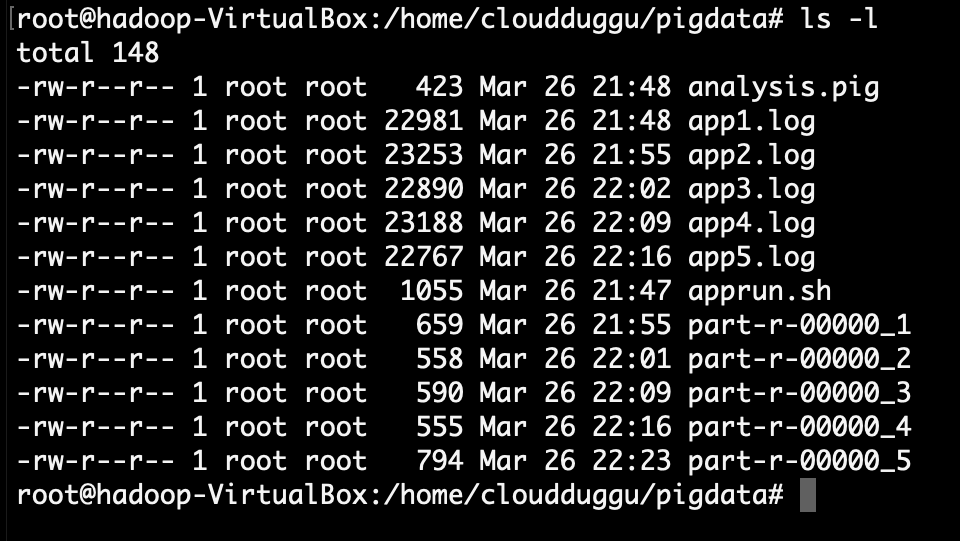

The Pig System folder after the project has finished running.

Enjoy the Pig project! :)