What is an Apache Kafka Producer?

An Apache Kafka producer is a client that publishes messages (stream data) to selected topics in a Kafka cluster. Producers differ in how they push data. Some produce at high velocity with low volume per message (small payloads at high throughput), while others produce low-velocity data at very high volume (large messages at low throughput).

For example, in a credit card transaction processing system, a client application, possibly an online store, is responsible for sending each transaction to Kafka as soon as a payment is made. Another application validates the transaction against a set of rules and approves or denies it based on the outcome. The response is also written to Kafka and delivered back to the online store, and the transaction is then marked as completed.

Client applications use the Producer API to send streams of data to topics in the Kafka cluster.

Let us see a high-level overview of Apache Kafka producer components.

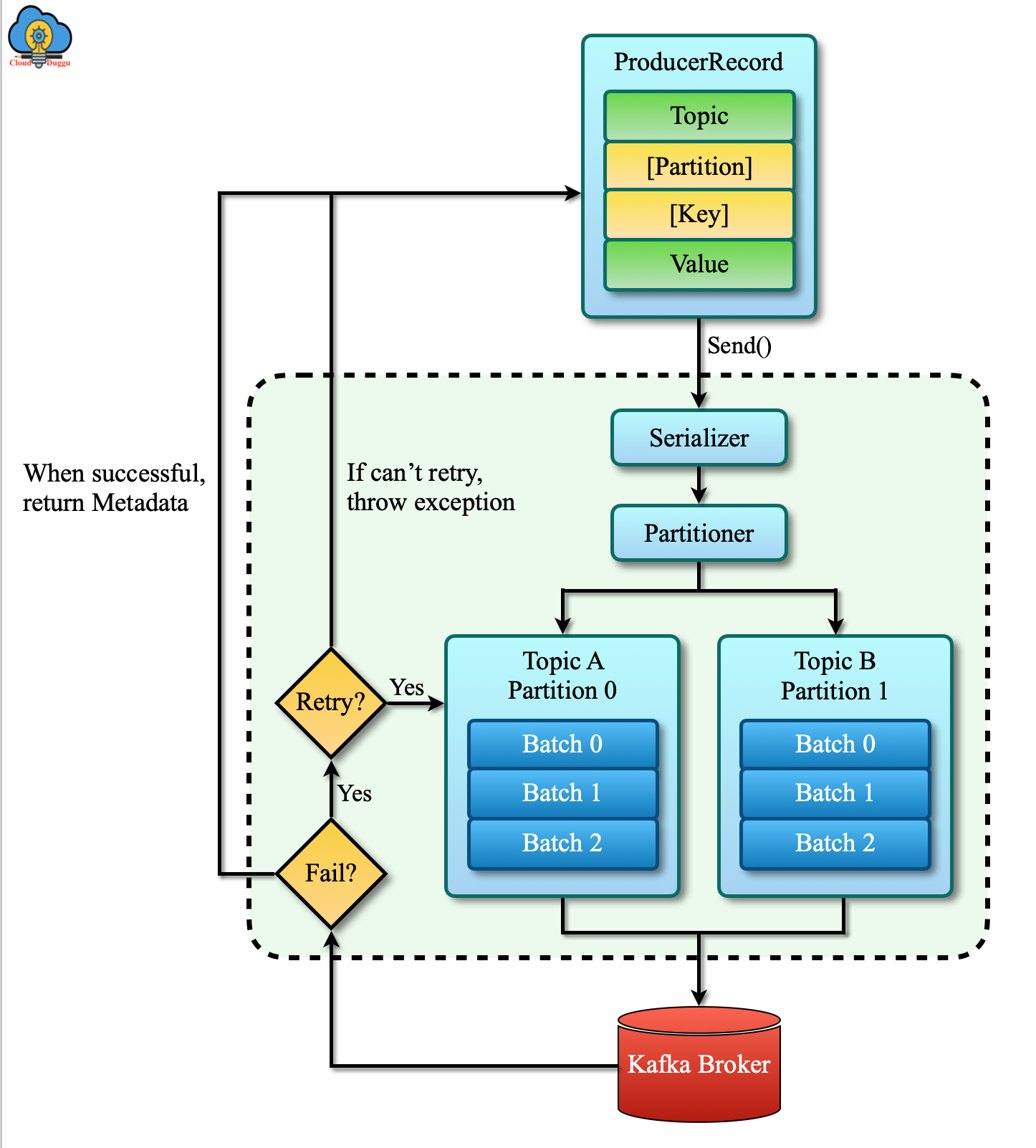

Let us see the steps involved in sending data to Kafka.

- The first step is to create a ProducerRecord, which must include the topic we want to send the record to and a value. Optionally, we can also specify a key and a partition.

- In the second step, the producer receives the ProducerRecord and serializes the key and value objects to byte arrays so that they can be sent over the network. The serialized record is then passed to the next component, the partitioner.

- In the third step, the partitioner receives the record and checks whether a partition is specified. If it is, the partitioner does nothing and simply returns that partition. If it is not, the partitioner chooses a partition for us, usually based on the ProducerRecord key.

- In the fourth step, once a partition is selected, the producer knows which topic and partition the record will go to. It then adds the record to a batch of records that will be sent to the same topic and partition.

- In the fifth step, a separate thread sends those batches of records to the relevant broker.

- In the sixth step, the broker receives the data. If the write succeeds, it returns a success response; if the write fails, it returns an error.

- In the seventh step, when the producer receives an error, it may retry sending the message a few more times before giving up and returning the error.
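Steps four to seven can be modelled in a few lines of plain Java. This is only a sketch under stated assumptions: it uses the standard library alone, it runs synchronously rather than on a separate sender thread, and the names `BatchingSketch`, `append`, `batchSize`, `sendWithRetries` and the `broker` predicate are hypothetical and not part of the Kafka client.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Predicate;

// Stdlib-only model of batching and retries; none of these names come from the Kafka client.
class BatchingSketch {
    // Serialized records waiting to be sent, grouped by "topic-partition".
    private final Map<String, List<byte[]>> batches = new HashMap<>();

    // Step 4: append a serialized record to the batch for its topic and partition.
    void append(String topic, int partition, byte[] serializedValue) {
        batches.computeIfAbsent(topic + "-" + partition, k -> new ArrayList<>())
               .add(serializedValue);
    }

    // Number of records currently waiting for the given topic and partition.
    int batchSize(String topic, int partition) {
        List<byte[]> batch = batches.get(topic + "-" + partition);
        return batch == null ? 0 : batch.size();
    }

    // Steps 5 to 7: hand the batch to the broker, retrying up to maxRetries times
    // after the first failed attempt. 'broker' is a hypothetical stand-in that
    // returns true when the write succeeds. Returns the number of attempts used,
    // or throws once the retries are exhausted.
    int sendWithRetries(String topic, int partition,
                        Predicate<List<byte[]>> broker, int maxRetries) {
        String batchKey = topic + "-" + partition;
        List<byte[]> batch = batches.getOrDefault(batchKey, new ArrayList<>());
        for (int attempt = 1; attempt <= maxRetries + 1; attempt++) {
            if (broker.test(batch)) {
                batches.remove(batchKey); // the batch was written, so drop it
                return attempt;
            }
        }
        throw new IllegalStateException("send failed after " + maxRetries + " retries");
    }
}
```

In this model a batch is only discarded after the broker reports success, which is why a failed attempt can be retried with the same records.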

Apache Kafka Producer Components

Let us see Apache Kafka Producer Components in detail.

1. Kafka Producer Construction

Constructing a producer is the first step in writing messages to Kafka. We configure the producer by setting its properties.

A Kafka producer has three mandatory properties, described below.

1.1 bootstrap.servers

This property provides a list of host:port pairs of brokers that the producer uses to establish its initial connection to the Kafka cluster. The list does not need to include all brokers, since the producer learns about the rest after the initial connection. Still, the recommendation is to include at least two, so that if one broker goes down the producer can still connect through the other.

1.2 key.serializer

This property provides the name of the class used to serialize the keys of the records produced to Kafka. Kafka brokers expect byte arrays as the keys and values of messages, so the producer must convert key objects to bytes before sending.

1.3 value.serializer

This property provides the name of a class that will be used to serialize the values of the records which will be produced to Kafka.
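The three mandatory properties might be set as in the sketch below. It uses only `java.util.Properties`, so it runs without the Kafka client on the classpath. The broker addresses `broker1:9092` and `broker2:9092` are placeholders, the class and method names are hypothetical, and the choice of StringSerializer for both key and value is an assumption made for illustration.

```java
import java.util.Properties;

// Builds the minimal producer configuration; class and method names are illustrative.
class ProducerConfigSketch {
    static Properties buildProducerProperties() {
        Properties props = new Properties();
        // Two brokers are listed so the initial connection survives one broker being down.
        // The host names are placeholders, not real addresses.
        props.setProperty("bootstrap.servers", "broker1:9092,broker2:9092");
        // Fully qualified class names of the serializers shipped with the Kafka client.
        props.setProperty("key.serializer",
                "org.apache.kafka.common.serialization.StringSerializer");
        props.setProperty("value.serializer",
                "org.apache.kafka.common.serialization.StringSerializer");
        return props;
    }
}
```

With the kafka-clients library available, a `Properties` object like this one can be passed to the `KafkaProducer` constructor to create the producer.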

2. Serializers

A serializer converts an object into a byte array for transmission. In the Kafka client, Serializer is an interface, and Kafka stores and transmits the resulting byte arrays. The Kafka client package includes ByteArraySerializer, StringSerializer, and IntegerSerializer, so if we use common types there is no need to implement our own serializers.
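To make the idea concrete, here is a minimal sketch of what serializing common types amounts to. `SimpleSerializer` is a hypothetical one-method stand-in, not Kafka's actual Serializer interface, and the two implementations only approximate what StringSerializer and IntegerSerializer do, assuming UTF-8 text and four-byte big-endian integers.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

class SerializerSketch {
    // Hypothetical stand-in for a serializer: one object in, one byte array out.
    interface SimpleSerializer<T> {
        byte[] serialize(T data);
    }

    // Strings become their UTF-8 bytes.
    static final SimpleSerializer<String> STRING =
            s -> s.getBytes(StandardCharsets.UTF_8);

    // Integers become four bytes, most significant byte first (ByteBuffer's default order).
    static final SimpleSerializer<Integer> INTEGER =
            i -> ByteBuffer.allocate(4).putInt(i).array();
}
```

For example, `SerializerSketch.INTEGER.serialize(258)` yields the four bytes 0, 0, 1, 2. A custom type, such as a transaction object, would need its own implementation that decides how each field is laid out in bytes.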

3. Partitions

Kafka messages are key-value pairs, and most applications produce records with keys. With the default partitioner, messages with the same key go to the same partition.
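The reason equal keys land on the same partition is that the partition is computed from a hash of the key. The sketch below illustrates the principle only: `KeyPartitionSketch` and `partitionFor` are hypothetical names, and `Arrays.hashCode` is a stand-in for the murmur2 hash that Kafka's default partitioner applies to the serialized key, so the partition numbers it produces will not match a real cluster.

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;

class KeyPartitionSketch {
    // Maps a key to a partition in the range [0, numPartitions).
    // Arrays.hashCode is an illustrative substitute for Kafka's murmur2 hash.
    static int partitionFor(String key, int numPartitions) {
        byte[] keyBytes = key.getBytes(StandardCharsets.UTF_8);
        int hash = Arrays.hashCode(keyBytes);
        // Clear the sign bit so the modulo result is never negative.
        return (hash & 0x7fffffff) % numPartitions;
    }
}
```

Because the result depends on both the key and the partition count, the same key keeps mapping to the same partition only while the number of partitions stays unchanged.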